Whisker Human-Machine Interface

Designing the Human-Machine Interface for the Litter-Robot Platform

At Whisker, I led the human-machine interface (HMI) strategy for the next generation of Litter-Robot products, including Litter-Robot 5, Litter-Robot 5 Pro, and Litter-Robot Evo.

These robots operate at the intersection of hardware, software, and everyday pet-care routines. The interface needed to be immediately understandable, durable in a home environment, and capable of supporting a growing connected ecosystem.

My work focused on defining the physical interface architecture, collaborating with engineering and industrial design on hardware decisions, and aligning the robot interface with the broader product ecosystem.

Simplifying the Robot Experience

Previous generations of the Litter-Robot exposed many configuration options directly on the device, accessed through combinations of physical button presses.

While this approach was flexible, many of these interactions were hard to discover and often required consulting the documentation.

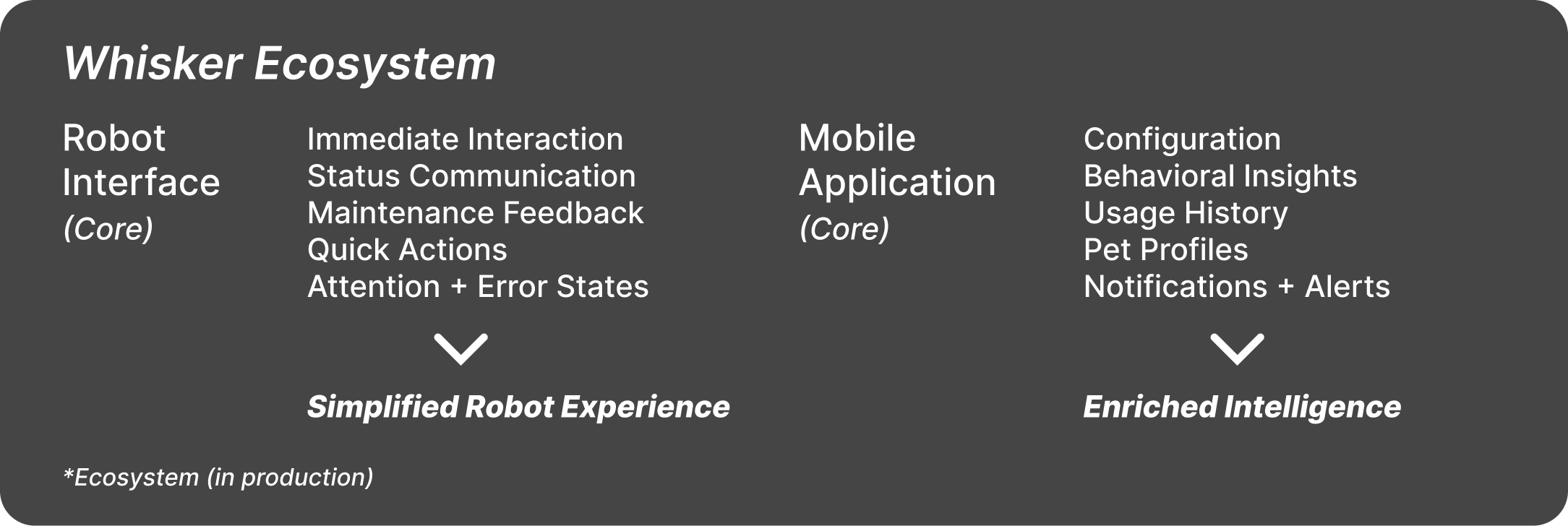

As the mobile application matured, many of these configuration capabilities were already available through the connected experience. Rather than duplicating that complexity on the robot itself, I advocated for simplifying the physical interface and allowing the mobile application to handle deeper configuration.

Settings removed from the physical robot interface included:

• Sleep mode configuration

• LED lighting controls

• Control panel lock

• Cycle delay (how long the robot waits after a cat exits before starting a clean cycle)

These capabilities remained fully accessible through the mobile application.

This approach simplified the physical robot while allowing the connected experience to handle more advanced configuration and insights.

For many owners, the robot functions primarily as a utility device. They set it up once, periodically refill litter, and empty the waste drawer when needed. Those users rarely interact with advanced settings directly on the device.

By focusing the robot interface on essential actions and clear status communication, the experience became easier to understand while still supporting deeper functionality through the connected ecosystem.

Designing the Hardware Interface

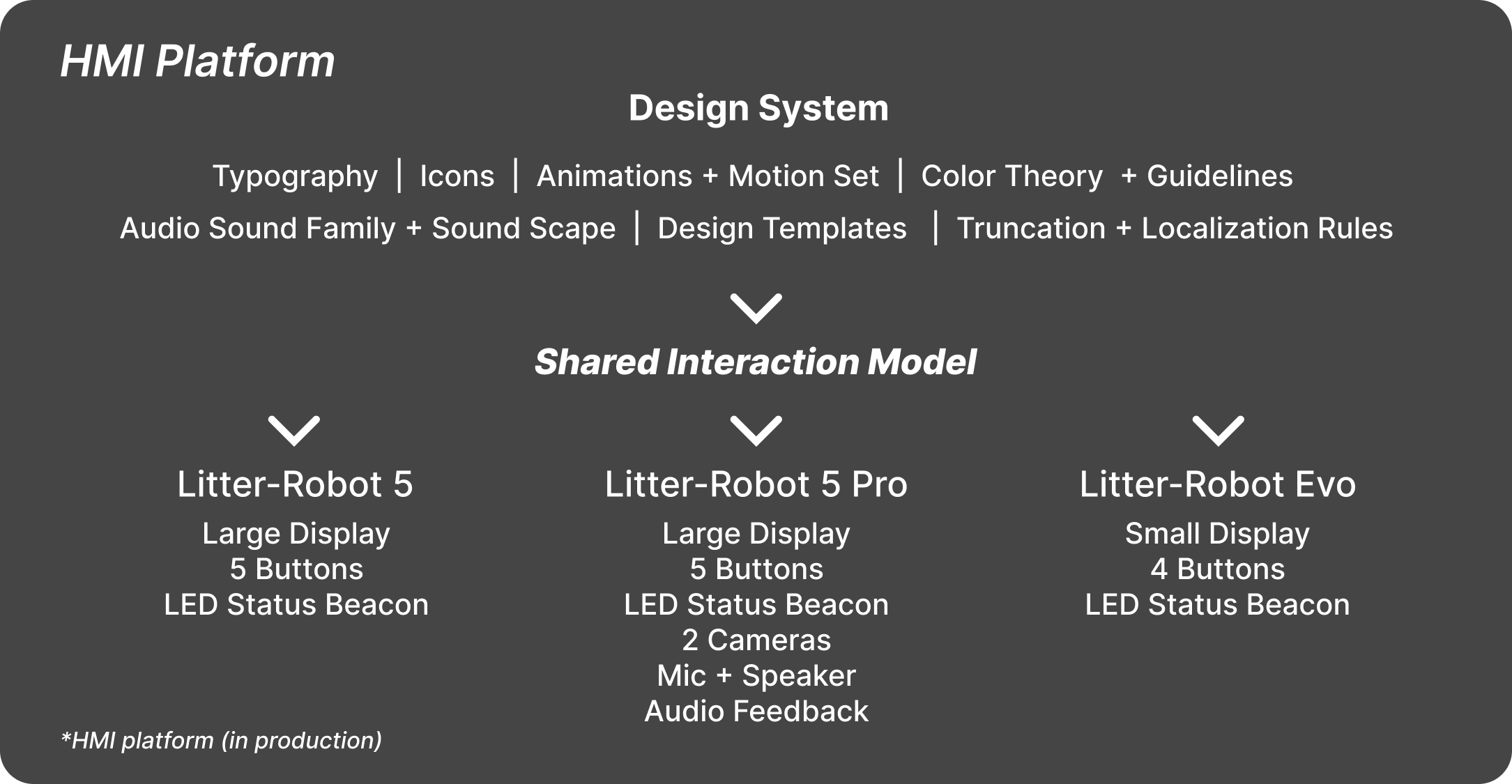

Across the Litter-Robot 5 platform, I helped define the HMI hardware architecture, including display configurations, button layouts, and component selection.

To support multiple product tiers, I championed two display configurations across the lineup.

Litter-Robot 5 and Litter-Robot 5 Pro used a larger display paired with five physical buttons, while Litter-Robot Evo used a smaller display with a four-button layout as part of its cost-optimized design.

Working closely with engineering and industrial design, I evaluated display components based on pixel pitch, brightness, viewing angle, and manufacturing cost. These choices were negotiated as bill-of-materials trade-offs, balancing usability against cost and other product constraints.

Despite the hardware differences between models, the interaction model and visual language remained consistent across the platform.

Redesigning the Attention Beacon System

Earlier versions of the Litter-Robot relied on combinations of LEDs and flashing patterns to communicate system states. While technically flexible, this signaling system could be hard to interpret without consulting the documentation.

For the Litter-Robot 5 platform, I redesigned the status system into a simplified hierarchy built around a single LED indicator positioned beside the display.

Each color represents a clear level of urgency:

White

System ready and operating normally.

Blue

Setup and configuration states such as onboarding or firmware updates.

Green

Active use states such as when a cat is detected inside the robot.

Yellow

Non-critical attention states such as low litter levels or connectivity issues.

Red

Critical errors that prevent the robot from operating and require attention.

This simplified structure allowed owners to understand the robot’s status at a glance.
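Because a single LED can show only one color at a time, some rule has to decide which state wins when several apply at once, for example a cat inside the robot while litter is running low. The sketch below assumes the most urgent state simply takes precedence. The `Beacon` enum, the `resolve_beacon` function, and the exact priority order are hypothetical illustrations of the urgency hierarchy, not Whisker's firmware.

```python
from enum import IntEnum


class Beacon(IntEnum):
    """Hypothetical beacon states. Higher values win when states overlap (assumed order)."""

    WHITE = 0   # Ready and operating normally
    GREEN = 1   # Active use, e.g. a cat is detected inside the robot
    BLUE = 2    # Setup or configuration, e.g. onboarding or a firmware update
    YELLOW = 3  # Non-critical attention, e.g. low litter or a connectivity issue
    RED = 4     # Critical error that prevents the robot from operating


def resolve_beacon(active: set[Beacon]) -> Beacon:
    """Pick the one color the single LED shows when several states are active."""
    if not active:
        # No state has been raised, so fall back to the normal "ready" color.
        return Beacon.WHITE
    # IntEnum members compare by value, so max() returns the highest-priority state.
    return max(active)


if __name__ == "__main__":
    # A cat is inside the robot and litter is low: the attention state wins.
    print(resolve_beacon({Beacon.GREEN, Beacon.YELLOW}).name)  # YELLOW
```

Treating urgency as a strict priority is an assumption; setup (blue) and active use (green) in particular could reasonably be ranked either way.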

Establishing the Embedded Design System

The Litter-Robot 5 platform required a dedicated design system tailored to embedded displays.

Unlike a mobile or web interface, the robot display must account for viewing distance, ambient lighting, and hardware constraints.

My team and I conducted extensive testing to determine optimal iconography, font sizes, layout structures, and animation behavior across the robot displays.

The resulting embedded design system defined:

• Typography hierarchy

• Layout and truncation rules

• Localization considerations

• Developer templates for engineering implementation

This work ensured visual consistency while giving engineering clear guidance for implementation.

Introducing Audio Interaction on Litter-Robot 5 Pro

Earlier Litter-Robot models had no audio feedback because their hardware did not include a speaker.

With Litter-Robot 5 Pro, the addition of a camera system introduced a microphone and speaker. This allowed owners to view their pets through the mobile application and communicate with them directly through the robot.

Because this was the first Litter-Robot capable of producing sound, we designed a structured audio system built around sound families.

We began by testing individual tones to establish baseline responses, then grouped sounds into families representing confirmations, alerts, and critical warnings.

This approach ensured audio feedback remained subtle and appropriate for a device operating continuously in a home environment.

Establishing the HMI Framework

When I joined Whisker, there was no structured process for designing and validating the robot interface.

I worked across engineering, product management, and industrial design to establish a framework for how the robot communicates visually, audibly, and through motion.

Over time, this structure established a clear point of ownership for HMI decisions and simplified collaboration across the organization.

Impact

The Litter-Robot 5 platform introduced a simpler and more intuitive robot interface while supporting the continued growth of the connected ecosystem.

By focusing the hardware interface on essential interactions and status communication, the robot became easier to understand while allowing the mobile application to handle deeper configuration and insights.

This approach balanced usability, hardware constraints, and long-term platform scalability across multiple product tiers.

Disclosure

Selected images, video content, and application screen captures are the property of Whisker and are used here for portfolio purposes only.

All work shown was created during my employment at Whisker and reflects collaborative efforts with cross-functional teams.

Only materials that have been publicly released or are currently available in the public version of the Whisker mobile application are included. No confidential or proprietary information is disclosed.

All trademarks, product names, and brand elements are the property of their respective owners.